The computing resources and business opportunities offered by cloud vendors like AWS can seem endless, and Amazon services can accelerate your business growth and profitability. Yet, like any other system in a growing business, your AWS environment needs to be managed efficiently and optimized over time to improve productivity and cut expenses. Cloud computing has a dedicated set of practices for that goal, called cloud cost optimization.

As cloud-based businesses grow and cloud consumption rises, the demand for cloud cost optimization tools is increasing. According to The Cloud Cost Management and Optimization Market Report for 2022-2029, companies like Harness, ParkMyCloud (Turbonomic), Virtana Optimize, Nomad, Kaseya Unigma, CloudZero, Flexera and many others have grown considerably in the past few years. Because the niche is growing and full of options, it can be difficult to choose the right AWS cloud cost optimization tool among all the available solutions.

The main question is whether native AWS tools meet your needs, or whether you need a third-party tool. In this article, we look at both options in terms of the capabilities that are considered critical for cloud cost optimization.

Key cloud cost optimization capabilities

So which capabilities are crucial for cloud cost optimization? Here is the list:

- tagging

- cost and utilization analytics

- resource scheduling

- alerts for specific events or anomalies that can cause waste if not managed properly (e.g. idle and overprovisioned resources)

These capabilities are key to efficient cloud cost management, and several vendors on the market cover most or all of them, including the native AWS cost optimization tools.

Native and third-party cost optimization tools

There are many AWS cloud cost optimization tools for exploring and allocating costs, as well as for tracking and analyzing cloud performance and resource utilization. While native tools seem like a natural choice, many companies find them insufficient for their business needs, or simply too cumbersome because they have to pull data from multiple AWS tools to see the whole picture. In that case, businesses start looking for more robust and scalable all-in-one solutions.

Scheduling

Fact: turning off your non-production instances on weekends and outside business hours (e.g. 6pm-9am) can save you up to 70% of their cost. Left running around the clock, these resources waste a large share of your budget. To avoid that, schedule your test and development environments by setting up AWS Instance Scheduler, which stops your Amazon EC2 and Amazon RDS instances according to the timetable you provide.
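The savings figure above can be checked with simple arithmetic. The sketch below is only an illustration: the function name and the 9am-6pm weekday schedule are assumptions, and it presumes that compute is billed per running hour and that a stopped instance incurs no compute charge (attached storage is still billed).

```python
HOURS_PER_WEEK = 7 * 24  # 168 hours in a week


def schedule_savings(start_hour: int, stop_hour: int, days_per_week: int) -> float:
    """Return the fraction of always-on compute cost saved by a weekly schedule.

    The instance runs from start_hour to stop_hour on days_per_week days
    and is stopped the rest of the time (hypothetical helper, not an AWS API).
    """
    if not 0 <= start_hour < stop_hour <= 24:
        raise ValueError("expected 0 <= start_hour < stop_hour <= 24")
    if not 0 <= days_per_week <= 7:
        raise ValueError("expected 0 <= days_per_week <= 7")
    running_hours = (stop_hour - start_hour) * days_per_week
    return 1 - running_hours / HOURS_PER_WEEK


# Running 9am-6pm on 5 weekdays means 45 of 168 hours.
savings = schedule_savings(start_hour=9, stop_hour=18, days_per_week=5)
print(f"{savings:.1%}")  # 73.2%
```

Under these assumptions the instance runs 45 of 168 hours per week, which is in line with the "up to 70%" estimate.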

Skeddly is a third-party tool designed to help you gain control over your expenses by managing the start and stop times of instances and virtual machines, which optimizes resource usage and reduces costs. You can automate backups and snapshots for instances, virtual machines, disks, and databases, and remove outdated snapshots to minimize storage expenses. It also provides broader IT automation capabilities and supports a range of services such as Amazon EC2 and RDS.

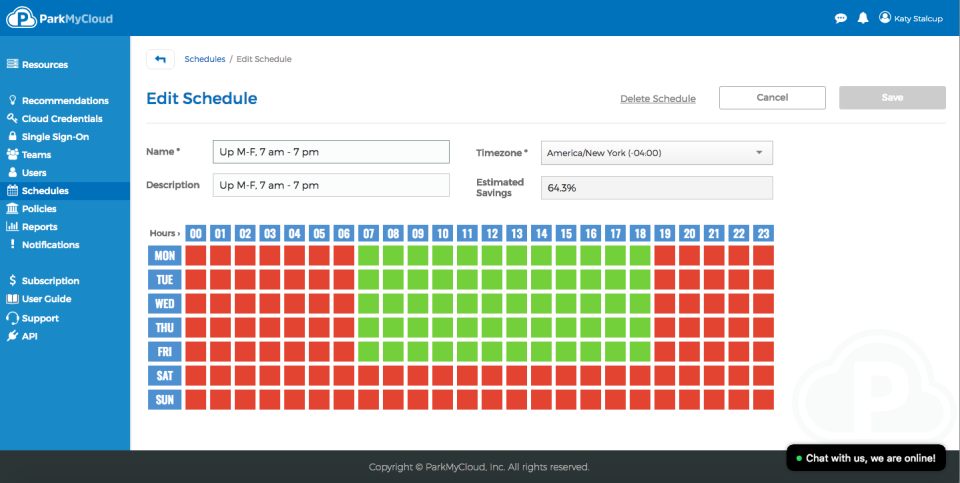

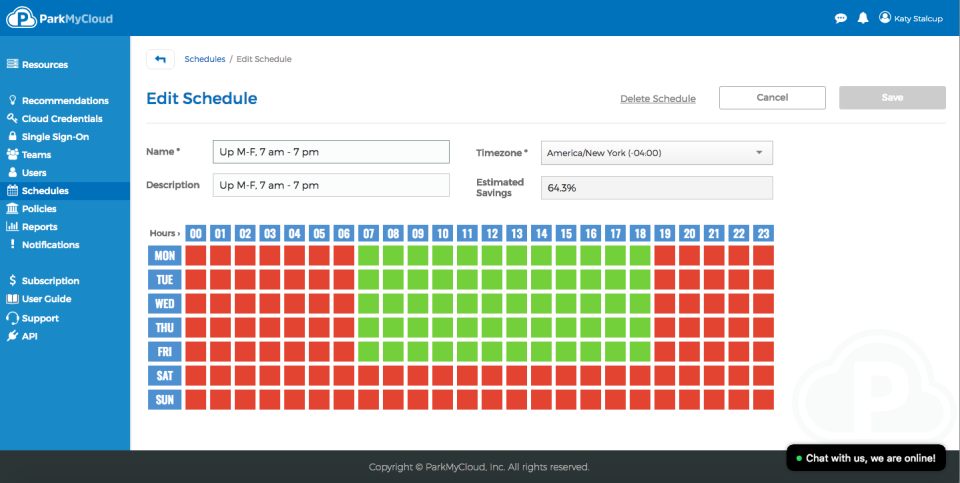

Many cloud users report a positive experience with ParkMyCloud (Turbonomic, recently acquired by IBM), a third-party platform that established itself as one of the top cloud cost management tools. Its Parking Schedule Management feature lets you create timetables and assign them to resources, so that you use and pay for those resources only when you need them.

As ParkMyCloud is no longer supported, you might want to consider a similar solution called CloudAvocado. Its scheduling capabilities are comparable, and it additionally supports tagging and utilization analytics in your environment to make cost management even more efficient.

AWS tagging tools

Tagging is required to allocate your cloud costs. Cloud cost allocation connects your AWS bill to specific parts of your product, features or organizational units. The process is straightforward: you assign tags as metadata to all resources, and then get the reports, analytics and insights you need per cost object. Even the scheduling described above can become quite challenging without proper tagging, as you won’t be able to identify your non-production instances.
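As a rough illustration of what tag-based cost allocation means, the sketch below groups billing line items by the value of one tag. The data layout, the tag names (`team`, `env`) and the function name are hypothetical and are not the format of any AWS report; resources without the tag fall into an "untagged" bucket.

```python
from collections import defaultdict


def allocate_costs(line_items, tag_key):
    """Sum cost per value of tag_key; items missing the tag go to 'untagged'."""
    totals = defaultdict(float)
    for item in line_items:
        cost_object = item.get("tags", {}).get(tag_key, "untagged")
        totals[cost_object] += item["cost"]
    return dict(totals)


# Hypothetical line items: resource ID, monthly cost in USD, and tags.
line_items = [
    {"resource": "i-0a1", "cost": 120.0, "tags": {"team": "checkout", "env": "prod"}},
    {"resource": "i-0b2", "cost": 45.5, "tags": {"team": "search", "env": "dev"}},
    {"resource": "vol-0c3", "cost": 10.0, "tags": {}},
    {"resource": "i-0d4", "cost": 30.0, "tags": {"team": "checkout", "env": "dev"}},
]

print(allocate_costs(line_items, "team"))
# {'checkout': 150.0, 'search': 45.5, 'untagged': 10.0}
```

The same `env` tag is what would let a scheduler pick out non-production instances; the size of the "untagged" bucket shows how much of the bill cannot yet be attributed.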

Native AWS tools that help you add and manage your resources’ tags are Tag Editor and AWS Config Managed Rules. Some third-party platforms also provide this functionality, but you need to be sure the tool can work with your resources across all your accounts. That enables proper tagging across all your regions, projects, etc. and results in accurate and consistent cost allocation.

CloudAvocado also works well for tagging and can help you track your tagging progress and display all untagged resources.

Cost and utilization analytics

Your workload needs to be reviewed on a regular basis to detect under- or overprovisioned resources. Underprovisioning occurs when the capacity of an instance is lower than the demand, which can cause performance issues in your applications. Overprovisioning wastes money because demand is lower than the instance capacity, which means you can potentially save budget by replacing the instance with one or more smaller and cheaper ones. The process of matching your capacity to the demand at the lowest possible cost without sacrificing reliability is called rightsizing, and it’s one of the most critical yet complicated tasks in cloud optimization. AWS provides the following tools to perform this task:

- AWS Cost Explorer allows you to see patterns in AWS spending over time, project future costs and identify areas that may require your attention. You can also use it to spot idle resources, such as EC2 instances, Amazon RDS instances, load balancers and unassociated Elastic IP addresses, so that you can delete them.

- AWS Cost and Usage Report provides you with data files that contain your detailed hourly AWS usage across accounts.

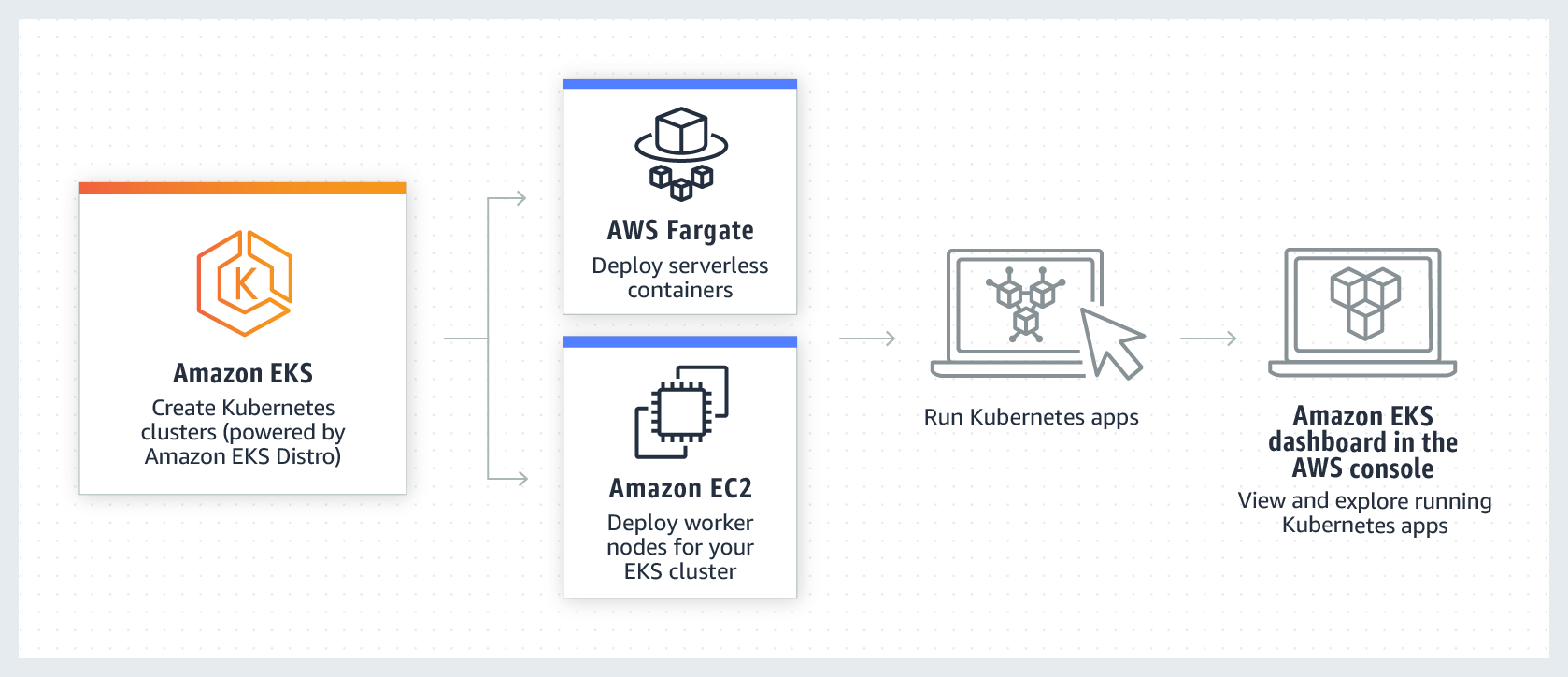

- AWS Compute Optimizer helps avoid overprovisioning and underprovisioning by using utilization data for some AWS resources (EC2 instances, EBS volumes, Amazon ECS services on AWS Fargate and AWS Lambda functions).

- Amazon CloudWatch collects and tracks utilization metrics for your resources.

- Amazon S3 Analytics provides automated analysis and visualization of Amazon S3 storage patterns for cost-efficient tier management of your storage. You can also automate data lifecycle management with Amazon S3 Intelligent-Tiering and identify opportunities to reduce Amazon S3 storage cost with Amazon S3 Storage Lens.
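To make the rightsizing idea from the start of this section more concrete, here is a minimal sketch that labels an instance from its CPU utilization samples. The 20% and 80% thresholds and the function name are illustrative assumptions, not AWS recommendations, and a real assessment would also consider memory, network and disk metrics.

```python
from statistics import mean


def classify_utilization(cpu_samples, low=20.0, high=80.0):
    """Label an instance from CPU utilization samples given in percent.

    - 'overprovisioned' if even the peak stays below `low`
    - 'underprovisioned' if the average exceeds `high`
    - 'ok' otherwise
    Thresholds are illustrative assumptions.
    """
    if not cpu_samples:
        raise ValueError("at least one utilization sample is required")
    if max(cpu_samples) < low:
        return "overprovisioned"
    if mean(cpu_samples) > high:
        return "underprovisioned"
    return "ok"


# Hypothetical hourly CPU samples (percent) for three instances.
print(classify_utilization([5, 8, 12]))     # overprovisioned
print(classify_utilization([85, 90, 95]))   # underprovisioned
print(classify_utilization([30, 50, 70]))   # ok
```

Using the peak for the overprovisioned check is a deliberately cautious choice: an instance that is mostly idle but occasionally busy is not flagged for downsizing.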

Some third-party tools cover all the native AWS capabilities mentioned above in a single UI.

For example, CloudAvocado can calculate your current monthly expenses and projected monthly cost, and provide you with an hourly CPU utilization breakdown for any instance, cluster or autoscaling group. AWS cost and utilization analytics are presented in dashboards and reports to help you make data-driven decisions on scaling your workload according to the demand.

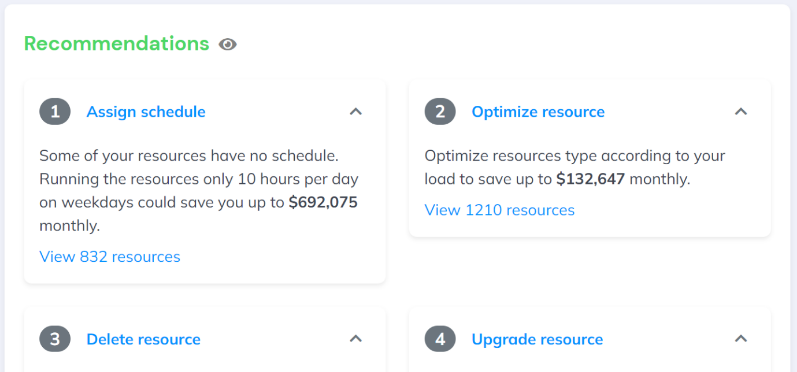
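One simple way to think about a projected monthly cost is a linear run-rate: extend the average daily spend so far to the whole month. The sketch below shows only that arithmetic. It is an assumption for illustration, not how CloudAvocado or AWS Cost Explorer compute their forecasts, and it treats the current day as fully elapsed.

```python
import calendar
from datetime import date


def project_monthly_cost(month_to_date_cost: float, today: date) -> float:
    """Extrapolate month-to-date spend linearly to the end of the month."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    daily_rate = month_to_date_cost / today.day  # today counted as a full day
    return daily_rate * days_in_month


# Hypothetical example: $300 spent by April 10 of a 30-day month.
print(project_monthly_cost(300.0, date(2024, 4, 10)))  # 900.0
```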

Recommendations and alerts for events

Cloud cost optimization tools analyze your cloud usage and spending patterns to identify potential cost-saving opportunities. By providing recommendations, these tools help you act proactively to reduce unnecessary expenses, optimize resource allocation, and eliminate wasteful spending. This can lead to significant cost savings over time.

AWS Trusted Advisor highlights potential areas of optimization for your workload, and AWS Budgets triggers alerts when cost or usage exceeds (or is forecasted to exceed) a budgeted amount.

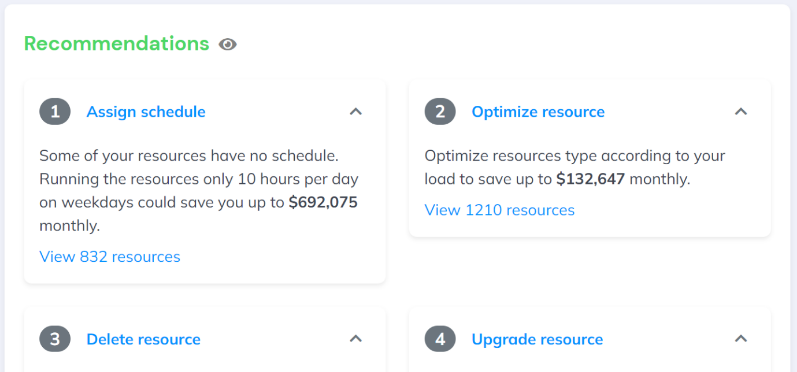
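The budget alert rule described above, with one alert for actual spend and one for forecasted spend, boils down to two comparisons. The sketch below illustrates only that logic: the function and the alert labels are hypothetical and are not the AWS Budgets API.

```python
from typing import List


def budget_alerts(budget: float, actual: float, forecast: float) -> List[str]:
    """Return which alert types fire for a budget (illustrative logic only)."""
    alerts = []
    if actual > budget:
        alerts.append("actual")      # spend has already exceeded the budget
    if forecast > budget:
        alerts.append("forecasted")  # spend is projected to exceed the budget
    return alerts


# Hypothetical month: $1,000 budget, $640 spent so far, $1,150 forecast.
print(budget_alerts(budget=1000.0, actual=640.0, forecast=1150.0))
# ['forecasted']
```

The forecast-based alert is the more useful of the two for cost control, since it fires while there is still time to act.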

In addition to the capabilities mentioned above, CloudAvocado has built-in recommendations that highlight different cases of unoptimized usage: idle, unscheduled and untagged resources, as well as resources that produce waste due to overprovisioning.

The verdict

Effective cloud cost optimization is essential for maximizing profitability. Since choosing the right tool can be challenging due to the variety of options available, it’s important to focus on the critical capabilities required for AWS cost optimization first. Look for cost allocation, resource scheduling, and alerts for identifying wasteful spending, and always remember that cost optimization is an ongoing process.

For more information on using AWS cloud cost optimization tools, read our cloud cost optimization checklist article.

Or simply sign up for a free CloudAvocado trial to start your AWS cost optimization: get analytics on your AWS spending, efficiency, and potential savings.